Resources

OLAB has access to industrial‑scale GPU computing through our partnership with NVIDIA, along with high‑performance infrastructure from HPE. The core compute nodes we use for training and evaluation are summarized below.

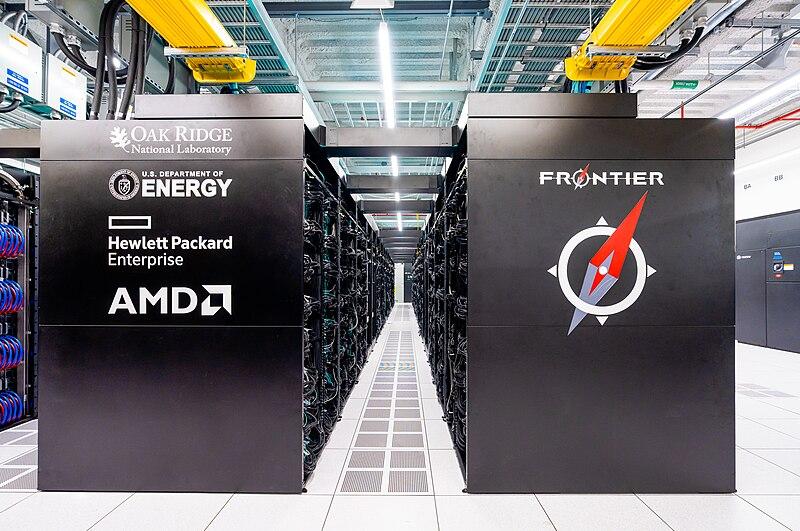

Systems gallery

Representative system photos from NVIDIA and HPE.

A100 nodes

- 8× A100‑40GB
- 2× AMD EPYC 7742 (64 cores) @ 2.25 GHz
- 1 TB RAM
- 96 TB local SSD

GH nodes

- 2× AMD EPYC 7742 (64 cores) @ 2.25 GHz
- 480 GB RAM
- 96 TB local SSD

H100 nodes

- 2× Intel Xeon Platinum 8480C (112 cores total)
- 2 TB RAM
- 30 TB NVMe SSD

Notes

- Resources are shared across NeuroAI and Medical AI projects, with scheduling for both long‑running training jobs and interactive experiments.
- Access is coordinated through OLAB; contact us for collaboration inquiries.